If you're producing ads, “character consistency” is not an aesthetic preference. It is a brand control requirement. The moment your spokesperson’s face, hair, or wardrobe drifts between variants, you lose the thing that makes campaign creative scalable: viewers stop recognizing the person, and your edit set stops feeling like one campaign. Teams often respond by generating more and picking the closest match, but that is a volume strategy, not a controllability strategy.

This article is about building consistent character ads as a workflow. The goal is not to create one perfect clip; the goal is to create a system where you can produce 10–100 variants (hooks, CTAs, languages, scenes) while keeping the spokesperson stable. Scope: as of February 2026, focused on short-form ad production rather than long narrative filmmaking.

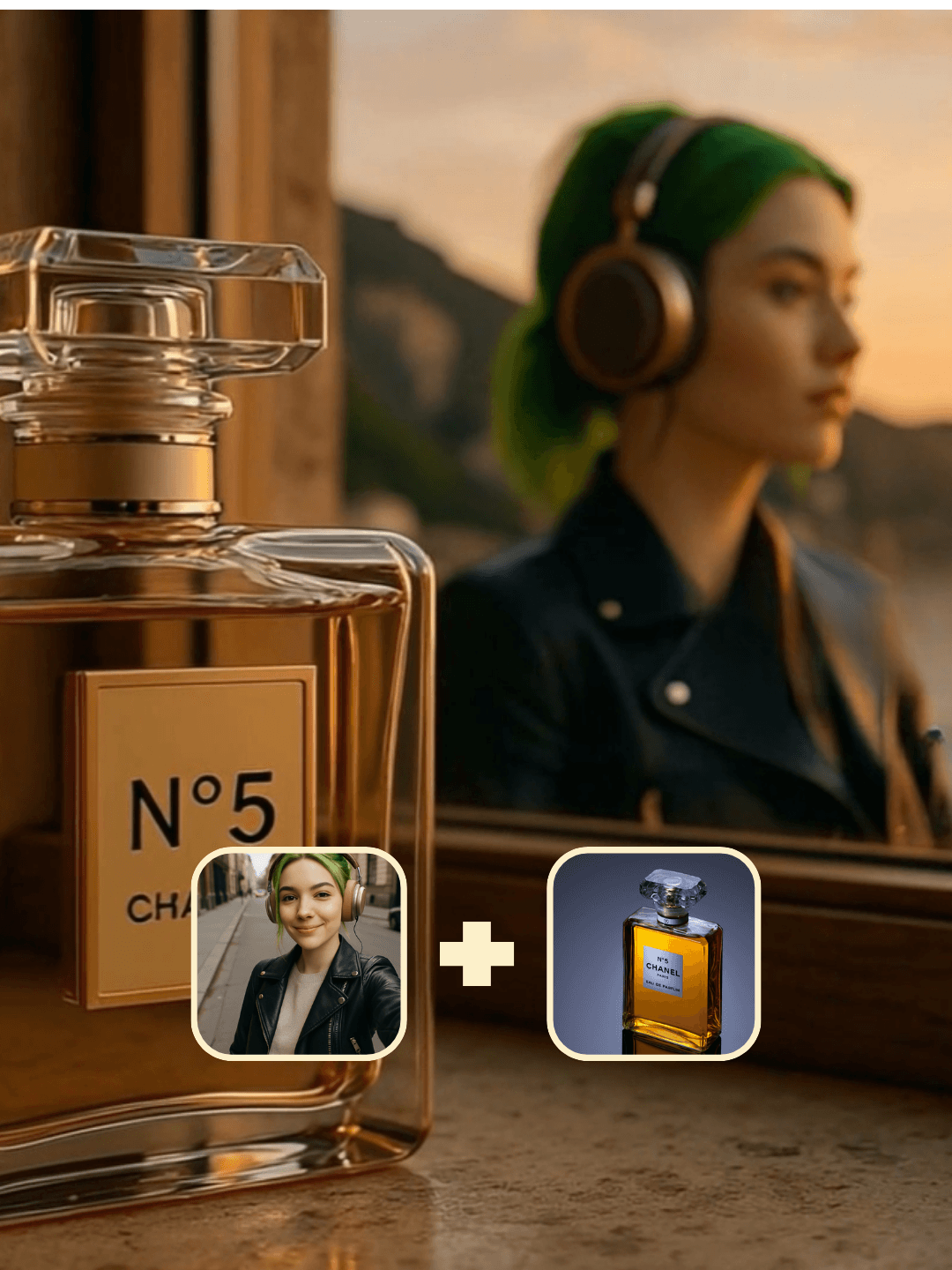

Quick answer: consistency is an asset problem, not a prompt problem

If you rely on prompts alone, the model will re-invent the person on every run, because it is optimizing the scene, not preserving identity. The stable approach is to turn identity into an explicit asset: a reference sheet (multi-angle images) and a short identity description that you reuse across all variants. Once identity is upstream, you can vary the downstream layers (scene, motion, copy) without losing the face.

This is why workflows win for ad production. They turn “one good generation” into a reusable system.

Why characters drift (and why “be consistent” doesn’t work)

Character drift is mostly variance compounding. Every generation contains noise, and every time you ask for a new scene or new motion, you give the model new reasons to reinterpret facial structure, hair shape, and wardrobe details. Without an identity anchor, it treats those as flexible degrees of freedom.

The drift is often subtle at first: a jawline angle changes, eyeliner thickness shifts, the hair parting swaps sides. But when you string eight variants together, the subtle drift becomes obvious, and the campaign looks like it features eight different creators.

If your production goal is variants, you should assume drift will happen and design the workflow to prevent it rather than trying to “out-prompt” it.

The workflow structure: identity upstream, variants downstream

A production-ready consistent character workflow is easiest to understand as three layers.

Layer 1 is identity: a reference sheet and a short do-not-change spec (face structure, hair, signature outfit elements). This is what you reuse across the entire campaign.

Layer 2 is message and structure: the script, hook pattern, CTA, subtitle format, and pacing. This layer is where you create variants on purpose.

Layer 3 is rendering: scene, camera, motion, and export specs (vertical, safe margins). This layer changes per platform, but it should not change identity.

The practical advantage is debuggability: if a variant drifts, you can rerun only the render stage with stricter identity conditioning, instead of reinventing the full creative.
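The three layers can be sketched as plain data, which makes the "rerun only the render stage" idea concrete. This is a minimal illustration, not OpenCreator's actual data model: every class name, every field, and especially the `identity_strength` knob are assumptions made for the example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Identity:
    # Layer 1: reused unchanged across the whole campaign.
    reference_images: tuple[str, ...]  # multi-angle reference sheet (hypothetical paths)
    do_not_change: tuple[str, ...]     # face structure, hair, signature outfit elements


@dataclass(frozen=True)
class Message:
    # Layer 2: the place where variants are created on purpose.
    hook: str
    script: str
    cta: str
    subtitle_style: str


@dataclass(frozen=True)
class Render:
    # Layer 3: changes per platform, but should not change identity.
    scene: str
    camera: str
    aspect_ratio: str
    identity_strength: float  # hypothetical knob: how hard to condition on Layer 1


@dataclass(frozen=True)
class Variant:
    identity: Identity
    message: Message
    render: Render


def rerender_stricter(variant: Variant, step: float = 0.1) -> Variant:
    """Return a copy whose render stage conditions harder on identity.

    Identity and message are carried over untouched, so only Layer 3 is rerun.
    """
    strength = min(1.0, round(variant.render.identity_strength + step, 2))
    return replace(variant, render=replace(variant.render, identity_strength=strength))
```

Because the dataclasses are frozen, a drifting variant cannot be "fixed" by quietly editing the identity or the script. The only path is a new `Variant` with a different `Render`, which mirrors the debugging approach described above.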

Start from a template that is already optimized for consistency

OpenCreator’s Consistent Character Ads template is designed around the exact ad problem: you want a stable spokesperson and fast iteration on variants.

Start here: Consistent Character Ads template.

If you want a deeper, asset-first approach to identity locking, build or generate a character reference sheet first and reuse it in downstream ad workflows; see the AI character reference sheet guide.

What to standardize first (so variants feel like one campaign)

Many teams try to standardize everything and end up with a rigid template that can't adapt. A more effective approach is to standardize only the parts that drive recognition and production speed.

Standardize identity first. Then standardize subtitle style and export specs. Finally, standardize the variant structure (hook, proof, CTA). Scenes and camera can vary more, as long as they don't force the system to rebuild the face.

If you do this in the opposite order (standardize scenes before identity), you end up with a beautiful set of clips that look like different people.

When consistency is still unstable (and how to fix it without “generate 200 times”)

If identity still drifts, it usually means your anchor is too weak for the amount of variation you introduced. The first fix is to reduce degrees of freedom: keep the same outfit, keep similar framing, keep similar lighting. The second fix is to make identity more explicit: use multi-angle references, not just a single portrait. The third fix is to localize change: do not change scene, camera motion, and script all at once in the same iteration loop.
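The first and third fixes lend themselves to a simple pre-flight check before a generation is queued. The sketch below assumes each variant is described by a flat dictionary of settings; the field names (`outfit`, `framing`, `lighting`, and so on) and the one-change limit are illustrative choices, not part of any particular tool.

```python
def changed_fields(baseline: dict[str, str], candidate: dict[str, str]) -> list[str]:
    """List the keys whose values differ between two variant specs."""
    keys = sorted(set(baseline) | set(candidate))
    return [k for k in keys if baseline.get(k) != candidate.get(k)]


def check_iteration(
    baseline: dict[str, str],
    candidate: dict[str, str],
    locked: tuple[str, ...] = ("outfit", "framing", "lighting"),  # assumed lock list
    max_changes: int = 1,
) -> list[str]:
    """Flag an iteration that touches locked fields or changes too much at once."""
    changed = changed_fields(baseline, candidate)
    problems = [f"locked field changed: {k}" for k in changed if k in locked]
    free = [k for k in changed if k not in locked]
    if len(free) > max_changes:
        problems.append(f"too many changes at once: {', '.join(free)}")
    return problems


baseline = {
    "outfit": "navy blazer",
    "framing": "medium close-up",
    "lighting": "soft key",
    "scene": "kitchen",
    "camera": "static",
    "script": "hook A",
}
risky = {**baseline, "scene": "office", "camera": "slow push-in", "script": "hook B"}
print(check_iteration(baseline, risky))
# ['too many changes at once: camera, scene, script']
```

An empty list means the candidate changes at most one unlocked setting, so any drift that appears can be attributed to that single change.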

A simple production habit is to create variants in batches where only one layer changes per batch: copy variants with the same scene, then scene variants with the same copy, then camera variants. That sequencing turns debugging into a controlled experiment rather than a guessing game.
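One way to enforce that habit is to generate the batch plan programmatically rather than by hand. The following is a hedged sketch: the `one_layer_batches` helper and the `copy`, `scene`, and `camera` keys are hypothetical names chosen for the example.

```python
def one_layer_batches(
    baseline: dict[str, str],
    options: dict[str, list[str]],
) -> list[list[dict[str, str]]]:
    """Build one batch per layer; inside a batch only that layer differs from baseline."""
    batches = []
    for layer, values in options.items():  # dict insertion order sets the batch order
        batch = [{**baseline, layer: value} for value in values if value != baseline[layer]]
        batches.append(batch)
    return batches


baseline = {"copy": "Hook A", "scene": "kitchen", "camera": "static"}
options = {
    "copy": ["Hook B", "Hook C"],  # batch 1: copy variants, same scene and camera
    "scene": ["office"],           # batch 2: scene variants, same copy and camera
    "camera": ["slow push-in"],    # batch 3: camera variants, same copy and scene
}

for number, batch in enumerate(one_layer_batches(baseline, options), start=1):
    for variant in batch:
        print(number, variant)
```

Every variant in the plan differs from the baseline in exactly one key, so if a clip drifts, the batch number alone identifies which layer caused it.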

Related: consistency across motion, not just still frames

Still-image consistency is already hard. Video consistency is harder because drift can happen frame-to-frame. If your goal is consistent motion as well as identity, treat motion as an asset too (reference performance), not something the model should invent every time.

For motion-driven reuse, the closest motion-asset primitive is motion transfer, covered in the Kling Motion Control guide. For camera-driven consistency, pair your variants with a storyboard structure, as described in the AI storyboard and multi-camera workflow guide.

FAQ

Is character consistency mainly a model choice?

Model choice matters, but most drift is a workflow problem. Without identity assets and stable conditioning, even strong models will drift as you introduce new scenes and motion.

Do I need a reference sheet or is one portrait enough?

One portrait can work for a single clip. If you want variants, use a reference sheet. Multi-angle references reduce drift because they leave less ambiguity about face structure.

What should I vary first when building ad variants?

Vary copy first (hooks/CTAs) with identity and scene fixed. Then vary scene. Then vary camera motion. Changing everything at once makes it hard to diagnose drift.

How do I keep the campaign feeling cohesive even when scenes change?

Keep the spokesperson stable and standardize subtitles and export specs. The stable face creates recognition, and the shared subtitle and export conventions create cohesion, even across different environments.